The future of AI-driven semiconductors is not a distant possibility but a rapidly unfolding reality, fundamentally reshaping the silicon at the heart of our digital world. These are not just faster processors; they are architecturally distinct silicon platforms engineered from the ground up to perform the complex mathematical operations underpinning artificial intelligence and machine learning with unprecedented efficiency. Consequently, this specialized hardware is the critical enabler for everything from real-time language translation and autonomous vehicle navigation to the discovery of new pharmaceuticals and the optimization of global energy grids. Understanding this evolution is essential for anyone in technology, business, or policy, as it dictates the pace and possibilities of the next technological era.

Key Takeaways

- AI-driven semiconductors are purpose-built for parallel processing, moving beyond the limitations of traditional CPU architectures.

- Key players include GPUs, TPUs, NPUs, and FPGAs, each optimized for different stages of the AI workflow.

- The shift towards heterogeneous computing and chiplet-based designs is driving performance and efficiency gains.

- Advanced packaging technologies like 2.5D and 3D integration are becoming as crucial as transistor scaling.

- Edge AI deployment is creating massive demand for low-power, high-performance inference chips.

- Global supply chain resilience and geopolitical factors are critical to the future of AI chip production.

From General-Purpose to AI-Specific: A Paradigm Shift in Silicon

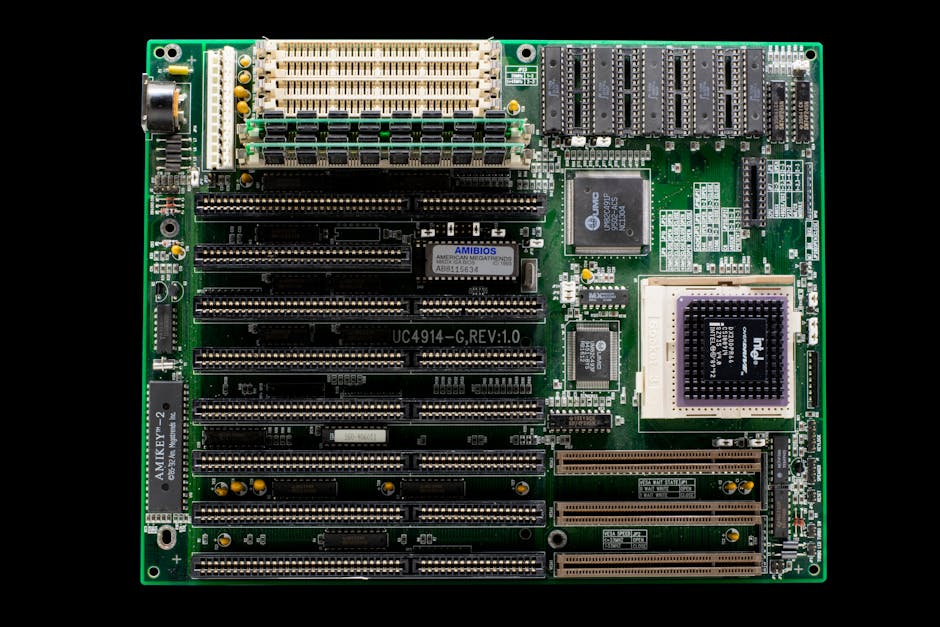

For decades, computational progress was synonymous with Moore’s Law, the observation that the number of transistors on a microchip doubles about every two years. However, general-purpose CPU scaling has hit physical and economic walls: since Dennard scaling broke down in the mid-2000s, shrinking transistors no longer brings a proportional drop in power, so each new process node delivers smaller efficiency gains than modern AI workloads demand. AI-driven semiconductors break from this legacy by embracing a domain-specific architecture (DSA) philosophy. Instead of a one-size-fits-all approach, these chips are designed to excel at the specific tensor and matrix operations that dominate neural network training and inference. On those workloads, this specialization can yield order-of-magnitude improvements in performance per watt, a metric now more critical than raw clock speed.

Furthermore, the computational demands of large language models (LLMs) such as GPT-4 and of large computer vision networks have exposed the fundamental mismatch between traditional von Neumann architectures and AI’s needs. The constant shuffling of data between separate memory and processing units creates a bottleneck known as the memory wall: compute units sit idle while they wait for operands to arrive. AI-specific chips attack this problem through techniques like in-memory computing, wider memory buses, and large on-chip SRAM buffers. For instance, a modern AI training chip might dedicate over half its die area to memory and interconnects, a stark contrast to a general-purpose CPU. This architectural rethink is what makes real-time AI applications feasible, from filtering your photo gallery to piloting a drone.
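The memory wall can be made concrete with a roofline-style estimate, in which attainable throughput is the smaller of the chip's peak compute rate and its memory bandwidth multiplied by the operation's arithmetic intensity (FLOPs per byte moved). The sketch below is illustrative only: the function names are hypothetical, the peak compute and bandwidth figures are made-up round numbers rather than the specification of any real chip, and it assumes each matrix crosses the memory bus exactly once.

```python
def matmul_arithmetic_intensity(m, n, k, bytes_per_element):
    """FLOPs per byte for C = A @ B with A (m x k) and B (k x n).

    Assumes each of A, B, and C is moved across the memory bus exactly once.
    """
    flops = 2 * m * n * k  # one multiply and one add per inner-product term
    bytes_moved = bytes_per_element * (m * k + k * n + m * n)
    return flops / bytes_moved


def attainable_flops(intensity, peak_flops, mem_bandwidth):
    """Roofline model: throughput is capped by compute or by memory traffic."""
    return min(peak_flops, intensity * mem_bandwidth)


# Hypothetical accelerator; these numbers are assumptions for illustration.
PEAK_FLOPS = 100e12     # 100 TFLOP/s
MEM_BANDWIDTH = 1e12    # 1 TB/s

# Matrix-vector product (typical of batch-1 inference): almost no data reuse.
gemv = matmul_arithmetic_intensity(1, 4096, 4096, bytes_per_element=2)
# Matrix-matrix product (typical of large-batch training): heavy data reuse.
gemm = matmul_arithmetic_intensity(4096, 4096, 4096, bytes_per_element=2)

for name, intensity in [("matrix-vector", gemv), ("matrix-matrix", gemm)]:
    achieved = attainable_flops(intensity, PEAK_FLOPS, MEM_BANDWIDTH)
    print(f"{name}: {intensity:.1f} FLOP/byte, {achieved / PEAK_FLOPS:.0%} of peak")
```

Under these assumptions the matrix-vector case is limited almost entirely by memory bandwidth, while the matrix-matrix case can keep the compute units busy. That gap is why keeping operands in large on-chip buffers matters so much.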

The Rise of the Neural Processing Unit (NPU)

A prime example of this specialization is the Neural Processing Unit (NPU), now a standard component in flagship smartphones and laptops. An NPU is an accelerator designed specifically for neural network operations. Many NPUs are built around a systolic array or a similar grid of multiply-accumulate units, in which operands flow from one processing element to the next so that the massive matrix multiplications at the core of deep learning need far fewer memory accesses. By handling these tasks, the NPU offloads work from the main CPU and GPU, which improves battery life and enables faster, more private on-device AI. The proliferation of NPUs signifies that AI acceleration is no longer confined to cloud data centers but is becoming a ubiquitous, personal computing requirement.
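To show how a systolic array performs matrix multiplication, here is a minimal cycle-by-cycle simulation in plain Python. It models one common variant, the output-stationary design, in which each processing element (PE) keeps its own accumulator while operands are passed between neighbors. The function name and data layout are hypothetical, and the sketch does not describe any particular vendor's NPU.

```python
def systolic_matmul(a, b):
    """Simulate an output-stationary systolic array computing a @ b.

    PE (i, j) owns the accumulator for c[i][j]. Row i of `a` enters from the
    left edge delayed by i cycles and column j of `b` enters from the top edge
    delayed by j cycles, so a[i][k] and b[k][j] meet at PE (i, j) on cycle
    t = i + j + k. Returns the product and the number of cycles simulated.
    """
    rows, inner, cols = len(a), len(b), len(b[0])
    acc = [[0] * cols for _ in range(rows)]
    a_reg = [[0] * cols for _ in range(rows)]  # operand each PE passes to the right
    b_reg = [[0] * cols for _ in range(rows)]  # operand each PE passes downward
    total_cycles = rows + cols + inner - 2

    for t in range(total_cycles):
        new_a = [[0] * cols for _ in range(rows)]
        new_b = [[0] * cols for _ in range(rows)]
        for i in range(rows):
            for j in range(cols):
                if j == 0:  # left edge: inject the skewed row of `a`
                    k = t - i
                    new_a[i][j] = a[i][k] if 0 <= k < inner else 0
                else:       # interior: take last cycle's value from the left neighbor
                    new_a[i][j] = a_reg[i][j - 1]
                if i == 0:  # top edge: inject the skewed column of `b`
                    k = t - j
                    new_b[i][j] = b[k][j] if 0 <= k < inner else 0
                else:       # interior: take last cycle's value from the neighbor above
                    new_b[i][j] = b_reg[i - 1][j]
                acc[i][j] += new_a[i][j] * new_b[i][j]  # local multiply-accumulate
        a_reg, b_reg = new_a, new_b

    return acc, total_cycles


product, cycles = systolic_matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]])
print(product, cycles)
```

Each operand is read from memory once at the array edge and then reused by every PE it passes through. This reuse is where the efficiency of the design comes from.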

Key Architectures Powering the AI Silicon Revolution

The landscape of AI-driven semiconductors is diverse, with different architectures optimized for various tasks within the AI pipeline. The most prominent player remains the Graphics Processing Unit (GPU), originally designed for rendering complex visuals. Its massively parallel structure, consisting of thousands of smaller, efficient cores, proved exceptionally well-suited for the parallelizable computations in AI training. Companies like NVIDIA have leveraged this advantage to establish a dominant market position with their CUDA software ecosystem and successive generations of data center GPUs. However, GPUs, while versatile, are not the most efficient solution for all AI tasks, leading to the development of more specialized alternatives.

Another major architecture is the Tensor Processing Unit (TPU), developed by Google. TPUs are application-specific integrated circuits (ASICs) built for neural network workloads; they were originally designed around TensorFlow, Google’s machine learning framework, and are now also programmed through JAX and PyTorch via the XLA compiler. The first generation targeted inference with low-precision arithmetic (8-bit integers), while later generations added reduced-precision floating point (bfloat16) for training, offering strong performance and energy efficiency for targeted workloads within Google’s infrastructure. Beyond GPUs and TPUs, Field-Programmable Gate Arrays (FPGAs) offer a flexible middle ground. These chips can be reconfigured after manufacturing, allowing developers to create custom hardware accelerators for specific AI models or algorithms. This makes FPGAs invaluable for prototyping new architectures and for deployment in scenarios where algorithms are still evolving.
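The appeal of low-precision arithmetic is easier to see with a small example. The sketch below uses symmetric per-tensor 8-bit quantization, one common scheme among several, to compute a dot product with integer multiply-accumulates and then rescale the result. The function names and sample values are hypothetical, and real accelerators and frameworks differ in details such as rounding, per-channel scales, and accumulator width.

```python
def quantize_int8(values):
    """Symmetric per-tensor quantization of floats to integers in [-127, 127]."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0  # real value of one integer step
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def int8_dot(weights, activations):
    """Dot product computed on quantized integers, then rescaled to a float."""
    q_w, scale_w = quantize_int8(weights)
    q_a, scale_a = quantize_int8(activations)
    # Integer multiply-accumulate; hardware typically uses a wider (e.g. 32-bit)
    # accumulator so the running sum cannot overflow.
    accumulator = sum(w * a for w, a in zip(q_w, q_a))
    return accumulator * scale_w * scale_a


# Made-up sample values for illustration.
weights = [0.12, -0.53, 0.98, -0.07]
activations = [1.5, 0.25, -0.8, 2.0]

exact = sum(w * a for w, a in zip(weights, activations))
approx = int8_dot(weights, activations)
print(f"float result: {exact:.4f}, int8 result: {approx:.4f}")
```

The integer result is slightly off from the floating-point one. In exchange, each operand needs a quarter of the storage and memory bandwidth of a 32-bit float, and integer multipliers are smaller and cheaper in silicon.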

“We are witnessing the end of the one-architecture-fits-all era. The future belongs to heterogeneous systems that integrate CPUs, GPUs, NPUs, and other accelerators, each handling the workload it does best,” notes a semiconductor analyst from Gartner.

The Critical Role of Advanced Packaging and Chiplet Design

As transistor scaling becomes more challenging and expensive, the industry’s focus has expanded from simply making transistors smaller to integrating them smarter. This is where advanced packaging and chiplet designs become paramount for the future of AI-driven semiconductors. Instead of building a single, monolithic die that contains all functions—a process prone to yield issues and physical limits—chip designers are now creating smaller, modular chiplets. These chiplets, which might separately contain CPU cores, AI accelerator arrays, and high-bandwidth memory, are then integrated onto a single package using sophisticated interconnects. This approach, often called heterogeneous integration, allows for mixing and matching the best silicon technologies for each function.
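The yield argument for chiplets can be illustrated with the simple Poisson yield model, in which the probability that a die has no defects is exp(-area × defect density). The sketch below compares the wafer area consumed per working product for one large die versus four smaller chiplets. The defect density and die sizes are made-up illustrative values, the function names are hypothetical, and the model assumes chiplets are tested before assembly (known-good die) while ignoring packaging cost and assembly yield.

```python
import math


def poisson_yield(area_cm2, defects_per_cm2):
    """Fraction of dies with zero defects under the Poisson yield model."""
    return math.exp(-area_cm2 * defects_per_cm2)


def silicon_per_good_unit(die_area_cm2, dies_per_unit, defects_per_cm2):
    """Average wafer area consumed per working product.

    Assumes every die is tested before assembly, so a defect scraps only the
    die it lands on rather than the whole product.
    """
    good_fraction = poisson_yield(die_area_cm2, defects_per_cm2)
    return dies_per_unit * die_area_cm2 / good_fraction


DEFECT_DENSITY = 0.1  # defects per cm^2, an assumed illustrative value

monolithic = silicon_per_good_unit(8.0, 1, DEFECT_DENSITY)  # one 800 mm^2 die
chiplets = silicon_per_good_unit(2.0, 4, DEFECT_DENSITY)    # four 200 mm^2 chiplets

print(f"monolithic die yield: {poisson_yield(8.0, DEFECT_DENSITY):.1%}")
print(f"single chiplet yield: {poisson_yield(2.0, DEFECT_DENSITY):.1%}")
print(f"wafer area per good product: {monolithic:.1f} vs {chiplets:.1f} cm^2")
```

The saving comes from discarding only the defective chiplets instead of an entire large die. In practice this gain has to be weighed against the added cost and complexity of the package and die-to-die interconnect.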

Technologies like 2.5D and 3D packaging are at the forefront of this trend. In 2.5D packaging, chiplets are placed side-by-side on a silicon interposer, a thin layer of silicon that provides extremely dense, high-speed electrical connections between them. 3D packaging takes this a step further by stacking chiplets vertically and connecting them with through-silicon vias (TSVs), microscopic vertical conductors that pass through the silicon dies. This dramatically shortens the distance data must travel, reducing latency and power consumption while increasing bandwidth, a crucial advantage for memory-hungry AI models. Companies like AMD, Intel, and NVIDIA are heavily investing in these packaging technologies, viewing them as essential for continuing performance scaling. An open question is how these complex 3D systems will be cooled and tested reliably at scale.

Driving Forces: From Cloud Giants to the Intelligent Edge

The insatiable demand from hyperscale cloud providers—Amazon Web Services, Microsoft Azure, Google Cloud, and others—is the primary engine fueling innovation in AI semiconductors. These companies operate vast data centers where AI models are trained on petabytes of data, a process requiring weeks of computation on thousands of interconnected chips. To reduce cost, latency, and dependency, they are increasingly designing their own custom AI chips, like Amazon’s Trainium and Inferentia or Google’s TPU. This trend, known as vertical integration, is forcing traditional chip vendors to innovate faster and offer more compelling, full-stack solutions that include software, libraries, and development tools.

Simultaneously, a powerful counter-trend is the explosive growth of AI at the edge. Edge AI involves running AI models directly on devices like smartphones, security cameras, sensors, and vehicles, rather than sending data to the cloud. This requires a completely different class of AI-driven semiconductors: ones that are incredibly power-efficient, low-cost, and capable of robust performance in variable environments. These edge AI chips often feature multiple specialized cores—an NPU for vision tasks, a digital signal processor for audio, a low-power CPU for control—all integrated into a single system-on-a-chip (SoC). The proliferation of the Internet of Things (IoT) and the need for real-time, privacy-preserving processing are making the edge the next major battleground for semiconductor companies.

Material Science and the Post-Silicon Frontier

While architectural innovation is delivering massive gains, fundamental improvements in semiconductor materials are also on the horizon. Silicon, the workhorse of the industry for over half a century, is approaching the physical limits of how far its transistors can shrink. Researchers are actively exploring materials that could replace or complement silicon. Two wide-bandgap semiconductors already complement it in power electronics. Gallium nitride (GaN) offers high electron mobility and can operate at higher voltages and temperatures than silicon, making it well suited to the power-efficient RF and power conversion chips that support AI infrastructure. Silicon carbide (SiC) plays a similar role in managing the high-power demands of AI data centers and electric vehicles.

Looking further ahead, the field of neuromorphic computing seeks to mimic the structure and function of the human brain in silicon. Neuromorphic chips use artificial neurons and synapses to perform computation in a massively parallel, event-driven manner, which is inherently low-power. While still largely in the research phase, prototypes from companies like Intel (Loihi) have demonstrated remarkable efficiency on specific tasks like pattern recognition. Similarly, the exploration of novel physics, such as quantum computing for specific optimization problems or photonic computing for ultra-fast, low-energy linear algebra, represents the long-term frontier for AI hardware. These approaches may one day provide breakthroughs that von Neumann-based architectures cannot.

The Geopolitical and Supply Chain Landscape

The strategic importance of AI-driven semiconductors has thrust them into the center of global geopolitical competition. Nations recognize that leadership in AI is inextricably linked to leadership in advanced chip design and manufacturing. This has led to significant government initiatives, such as the U.S. CHIPS and Science Act and the European Chips Act, which provide billions in subsidies to bolster domestic semiconductor research and production. The goal is to reduce reliance on a concentrated and geopolitically sensitive supply chain, particularly for the most advanced fabrication processes located in Taiwan and South Korea.

Furthermore, export controls on advanced chipmaking equipment and the AI chips themselves have become a tool of statecraft, impacting the global flow of technology. These controls aim to limit the military and strategic AI capabilities of geopolitical rivals but also create complexity and uncertainty for the global technology industry. Companies are now forced to navigate a fragmented landscape, considering factors like supply chain resilience, geographic diversification, and technology sovereignty in their long-term planning. Building a stable and secure supply for the critical minerals, rare earth elements, and advanced manufacturing tools required for AI chips is as much an engineering challenge as a diplomatic one.

Software and the AI Silicon Ecosystem

The hardware is only half the story; the software stack that allows developers to harness these complex AI-driven semiconductors is equally critical. A chip’s success is often determined by the maturity and accessibility of its software development kits (SDKs), compilers, libraries, and frameworks. NVIDIA’s longstanding dominance is arguably as much due to its CUDA parallel computing platform and cuDNN library as to its hardware. This software “moat” locks developers into an ecosystem, creating significant switching costs. Challengers, therefore, must not only build competitive silicon but also invest heavily in creating a robust, open, and performant software environment.

Initiatives like the Open Compute Project (OCP), which publishes open hardware specifications, and shared compiler infrastructure such as MLIR, a framework for building intermediate representations (IRs), are emerging to foster interoperability and reduce fragmentation. The ideal future is one where AI models can be compiled to run efficiently on any underlying hardware accelerator, much as code in a high-level programming language can be compiled for almost any CPU today. Achieving this “write once, run anywhere” dream for AI would accelerate innovation by decoupling algorithmic research from hardware constraints. Until then, the tight co-design of hardware and software, optimizing the stack from the silicon physics up to the AI framework, will remain a key competitive advantage.

Ethical and Societal Implications of Specialized AI Hardware

The rapid advancement of AI-driven semiconductors carries profound ethical and societal implications that must be proactively addressed. One major concern is the environmental footprint of training ever-larger AI models. While specialized chips are more efficient per computation, the total energy consumption of global AI infrastructure is soaring. The semiconductor industry must prioritize sustainability, exploring designs that maximize useful operations per joule and advocating for the use of renewable energy in massive data centers. Furthermore, the lifecycle of these chips, from the water-intensive fabrication process to electronic waste, requires a circular economy approach.

Another critical issue is access and equity. The high cost of designing and manufacturing cutting-edge AI chips could concentrate immense computational power—and thus the ability to develop frontier AI models—in the hands of a few corporations or nations. This could exacerbate global inequalities and limit the diversity of perspectives in AI development. Policies promoting open hardware designs, public AI research compute resources, and international collaboration are necessary to ensure the benefits of AI hardware advancements are broadly shared. As these chips enable more pervasive surveillance and autonomous systems, robust governance frameworks for their use are urgently needed to protect privacy, ensure fairness, and maintain human oversight.

Conclusion

The trajectory of AI-driven semiconductors is clear: we are moving irreversibly into an era of specialized, heterogeneous, and intelligently integrated computing platforms. The convergence of domain-specific architectures, advanced packaging, and new materials is unlocking capabilities that will power the next decade of intelligent applications, from personalized medicine to climate modeling. However, this technological revolution is inextricably bound to complex challenges in supply chain security, global competition, software development, and ethical governance.

Success in this new landscape will belong to those who can master the full stack, from transistor physics to compiler design to sustainable business models. For businesses and technologists, staying informed on these developments is no longer optional; it is essential for strategic planning and innovation. The chips being designed today will define the intelligence of tomorrow. Are you prepared to build on, or with, the foundational silicon of the AI age? The future of computing is being etched in silicon, one specialized accelerator at a time, and understanding this shift is the first step toward leveraging its transformative potential.