AI Content Strategy: How Military 5G Networks Blueprint Resilient AI Workflows

Lessons from military-grade 5G network deployments can help enterprise leaders build more resilient, secure, and efficient AI content operations. A March 4, 2026, report from Telecoms Tech News details how military 5G implementations in demanding environments such as NATO operations provide a proven blueprint for constructing robust private networks. For AI content creators and strategists, the same principles apply to content automation workflows: they should be fault-tolerant, secure from interference, and able to operate in resource-constrained or high-stakes commercial settings. The core insight for our industry is that resilience, once a military and telecom specialty, is now a non-negotiable requirement for any AI-driven content production pipeline that aims to be competitive and reliable.

Decoding the Military 5G Blueprint: Core Principles for Resilience

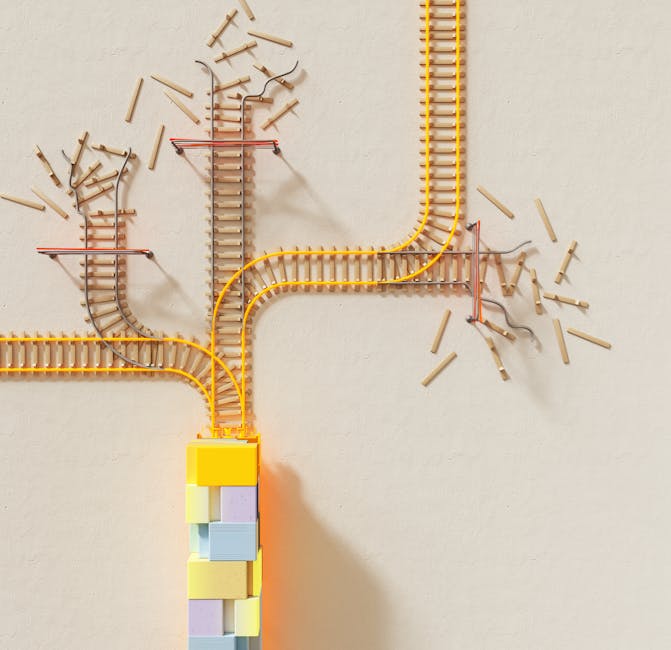

Military 5G networks are engineered for extreme conditions where failure is not an option. These deployments, often in remote or hostile territory, prioritize three fundamental principles that AI content strategists should adopt: autonomy, security-by-design, and dynamic resource allocation. Unlike commercial public networks, military systems operate as sovereign private networks with full control over spectrum, infrastructure, and data routing. This removes dependency on third-party providers whose outages or policy changes could cripple an operation. For instance, a military deployment might combine fixed towers, mobile cell-on-wheels (COW) units, and satellite backhaul to maintain connectivity. In AI terms, the equivalent is a content workflow that doesn’t depend on a single AI model API (such as OpenAI’s or Google’s Gemini), a single hosting provider, or a single data pipeline. The blueprint calls for a multi-vendor, hybrid architecture in which, if one component fails, others take over automatically and queued content generation tasks continue instead of being lost.
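One way to get the “others take over automatically” behavior is a per-provider circuit breaker: after a run of consecutive failures, traffic stops going to that provider for a cooldown period and flows to the next one in priority order. The following is a minimal Python sketch of that general pattern, not a method from the report; the provider names, the threshold of three failures, and the 60-second cooldown are illustrative assumptions.

```python
import time
from dataclasses import dataclass
from typing import Dict, Optional, Sequence


# Illustrative sketch: provider names, thresholds, and cooldowns are assumptions.
@dataclass
class CircuitBreaker:
    """Tracks one provider's health and 'opens' after repeated failures."""
    failure_threshold: int = 3      # consecutive failures before the breaker opens
    cooldown_seconds: float = 60.0  # how long an open breaker blocks traffic
    failures: int = 0
    opened_at: Optional[float] = None

    def available(self, now: float) -> bool:
        # Closed breaker, or an open one whose cooldown has elapsed (a trial request is allowed).
        if self.opened_at is None:
            return True
        return now - self.opened_at >= self.cooldown_seconds

    def record_success(self) -> None:
        self.failures = 0
        self.opened_at = None

    def record_failure(self, now: float) -> None:
        self.failures += 1
        if self.failures >= self.failure_threshold:
            self.opened_at = now  # (re)open the breaker from this moment


def pick_provider(order: Sequence[str], breakers: Dict[str, CircuitBreaker],
                  now: Optional[float] = None) -> Optional[str]:
    """Return the first provider, in priority order, whose breaker allows traffic."""
    now = time.monotonic() if now is None else now
    for name in order:
        if breakers[name].available(now):
            return name
    return None  # every path is down: escalate to a human or an offline fallback


breakers = {"primary_llm": CircuitBreaker(), "secondary_llm": CircuitBreaker()}
for t in (0.0, 1.0, 2.0):
    breakers["primary_llm"].record_failure(now=t)
print(pick_provider(["primary_llm", "secondary_llm"], breakers, now=10.0))  # secondary_llm
print(pick_provider(["primary_llm", "secondary_llm"], breakers, now=70.0))  # primary_llm
```

Using `time.monotonic()` rather than wall-clock time keeps the cooldown unaffected by system clock changes. Once a cooldown expires, the primary gets a trial request; because only `record_success` resets the failure count, a single further failure reopens the breaker.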

Security in these networks is not a bolt-on feature; it is woven into the architecture. This includes zero-trust network access (ZTNA), end-to-end encryption, and threat detection that continuously inspects traffic. For an AI content team, this means treating every piece of content, from the initial prompt to the final published article, as a potential attack vector. In practice, that means encrypting training and source data, storing API keys in enterprise-grade secret managers, and enforcing strict access controls on AI content platforms like EasyAuthor.ai. The military’s use of “network slicing” is particularly instructive. This 5G technology carves a single physical network into multiple isolated virtual networks. An AI studio could apply the same concept by creating separate, secured slices of its workflow: one for generating client-facing content, another for internal strategy documents, and a third for testing new AI models, so that a breach in one slice is contained instead of compromising the others.
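The slicing idea can be approximated in software by giving each workflow slice its own access policy and its own credential, and denying anything a slice does not explicitly allow. This Python sketch is an illustrative assumption rather than a description of any platform’s features: the slice names, roles, model labels, and secret paths are all hypothetical.

```python
from dataclasses import dataclass
from typing import FrozenSet


# Illustrative sketch: slice names, roles, model labels, and secret paths are hypothetical.
@dataclass(frozen=True)
class WorkflowSlice:
    """One isolated slice of the content pipeline with its own access policy."""
    name: str
    allowed_roles: FrozenSet[str]   # who may act inside this slice
    allowed_models: FrozenSet[str]  # which models this slice may call
    secret_ref: str                 # this slice's own key in a secrets manager


SLICES = {
    s.name: s
    for s in (
        WorkflowSlice("client_content", frozenset({"editor", "publisher"}),
                      frozenset({"primary-model"}), "content/client/llm-key"),
        WorkflowSlice("internal_strategy", frozenset({"strategist"}),
                      frozenset({"primary-model"}), "content/strategy/llm-key"),
        WorkflowSlice("model_testing", frozenset({"ml_engineer"}),
                      frozenset({"candidate-model"}), "content/testing/llm-key"),
    )
}


def authorize(slice_name: str, role: str, model: str) -> bool:
    """Zero-trust style check: deny unless the slice explicitly allows this role and model."""
    s = SLICES.get(slice_name)
    if s is None:
        return False  # unknown slice: deny by default
    return role in s.allowed_roles and model in s.allowed_models


print(authorize("client_content", "editor", "primary-model"))        # True
print(authorize("client_content", "ml_engineer", "candidate-model"))  # False
```

Because each slice references a different secret, revoking a leaked key for one slice leaves the others running, which is the software analogue of isolating one virtual network from the rest.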

Why AI Content Creators Must Adopt a “Resilience-First” Mindset

The volatile landscape of AI content creation in 2026 makes the military’s resilience blueprint not just beneficial, but essential. Content operations face three primary threats that mirror military challenges: single points of failure, adversarial attacks, and unpredictable resource environments. Relying solely on one Large Language Model (LLM) provider is a strategic vulnerability. When that provider’s API has an outage, experiences latency spikes, or makes a sudden policy change that breaks your automation, your entire content output grinds to a halt. Military networks plan for severed connections; AI workflows must plan for severed APIs. The solution is to architect your system with fallback models. Your primary workflow in EasyAuthor.ai might use GPT-4, but it should be configured to automatically switch to Claude 3, Gemini 2.0, or a fine-tuned open-source model like Llama 3 if the primary service fails or returns an error code.
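Not every error code should trigger a switch. A timeout, a rate limit (HTTP 429), or a server error (5xx) points to a degraded provider, while other 4xx responses usually mean the request itself is malformed and would fail on any model. The Python sketch below assumes each provider call is wrapped so that it raises a hypothetical `ProviderError` carrying the HTTP status (or `None` for a timeout); it does not use any vendor SDK, and the stub providers stand in for real API calls.

```python
from typing import Callable, List, Optional, Sequence, Tuple


class ProviderError(Exception):
    """Hypothetical wrapper for a failed model call; status is an HTTP code, or None for a timeout."""

    def __init__(self, status: Optional[int], message: str = "") -> None:
        super().__init__(message or f"status={status}")
        self.status = status


def should_fall_back(err: ProviderError) -> bool:
    # Timeouts, rate limits (429), and 5xx errors suggest the provider itself is degraded.
    # Other 4xx errors usually mean a bad request, so switching models would not help.
    return err.status is None or err.status == 429 or 500 <= err.status < 600


def generate_with_fallback(
    prompt: str, providers: Sequence[Tuple[str, Callable[[str], str]]]
) -> Tuple[str, str]:
    """Try providers in priority order and return (provider_name, text) from the first success."""
    failures: List[str] = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except ProviderError as err:
            if not should_fall_back(err):
                raise  # a malformed request would fail everywhere; surface it instead
            failures.append(f"{name}: {err}")
    raise RuntimeError("all providers failed: " + "; ".join(failures))


def flaky_primary(prompt: str) -> str:
    raise ProviderError(503)  # stub: simulates a provider outage


def backup(prompt: str) -> str:
    return f"draft for: {prompt}"  # stub: stands in for a real model call


print(generate_with_fallback("Q3 product roundup",
                             [("primary", flaky_primary), ("backup", backup)]))
# ('backup', 'draft for: Q3 product roundup')
```

Re-raising non-retryable errors keeps a broken prompt template from silently burning through every fallback model.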

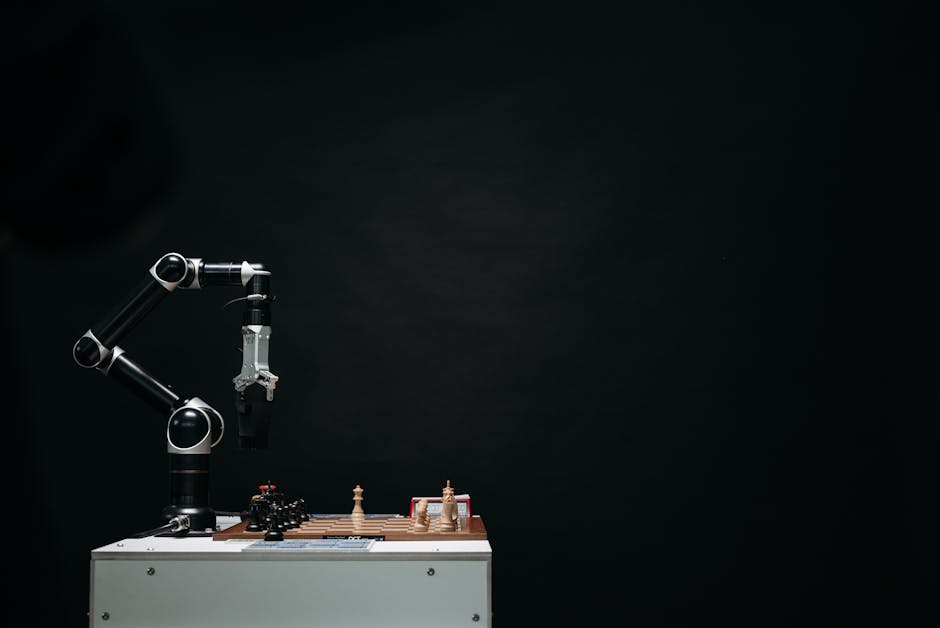

Adversarial attacks are a growing concern as well. “Prompt injection” attacks, in which malicious instructions hidden in source data trick an AI into generating off-brand or harmful content, are a form of digital warfare against your brand’s integrity. Military-grade security principles demand constant monitoring and validation. AI content teams need automated guardrails, such as secondary AI classifiers that scan generated content for toxicity, brand safety, and SEO compliance before it reaches a CMS. The “unpredictable resource environment” for creators could be a sudden Google algorithm update that calls for a sharp increase in output, or a breaking news event that requires an immediate change of topic. Military networks are designed for surge capacity and rapid re-tasking. Similarly, a resilient AI content pipeline must be able to scale its generation queue dynamically, re-prioritize content briefs instantly, and redistribute tasks across available AI resources without manual intervention.
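Rapid re-tasking of a generation queue can be modeled as a priority queue whose entries can be re-prioritized. The Python sketch below follows the lazy-deletion approach described in the standard library’s `heapq` documentation: a superseded entry is marked as removed and skipped when popped. The class name, brief IDs, and priority values are hypothetical; lower numbers run first.

```python
import heapq
import itertools
from typing import Dict, List

_REMOVED = object()  # sentinel marking a superseded heap entry


class BriefQueue:
    """Hypothetical generation queue: lower priority numbers run first; briefs can be re-prioritized."""

    def __init__(self) -> None:
        self._heap: List[list] = []
        self._entries: Dict[str, list] = {}  # brief_id -> its live heap entry
        self._counter = itertools.count()    # tie-breaker keeps FIFO order within a priority

    def push(self, brief_id: str, priority: int) -> None:
        """Add a brief, or move an existing one to a new priority."""
        if brief_id in self._entries:
            self._entries.pop(brief_id)[-1] = _REMOVED  # lazily invalidate the old entry
        entry = [priority, next(self._counter), brief_id]
        self._entries[brief_id] = entry
        heapq.heappush(self._heap, entry)

    def reprioritize(self, brief_id: str, priority: int) -> None:
        """Re-pushing an existing brief replaces its old priority."""
        self.push(brief_id, priority)

    def pop(self) -> str:
        """Remove and return the most urgent brief, skipping stale entries."""
        while self._heap:
            _, _, brief_id = heapq.heappop(self._heap)
            if brief_id is not _REMOVED:
                del self._entries[brief_id]
                return brief_id
        raise KeyError("pop from an empty brief queue")


q = BriefQueue()
q.push("evergreen-guide", 5)
q.push("product-faq", 3)
q.push("breaking-news-recap", 9)
q.reprioritize("breaking-news-recap", 0)  # breaking news jumps the queue
print([q.pop() for _ in range(3)])  # ['breaking-news-recap', 'product-faq', 'evergreen-guide']
```

The unique counter in each entry means the heap never has to compare brief IDs, and re-prioritizing costs one push instead of a full re-sort of the queue.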

Practical Implementation: Building Your Resilient AI Content Workflow

Translating military network theory into practice requires specific tools and configurations. Here is an actionable, step-by-step guide to hardening your AI content operation:

1. Architect for Redundancy (The Multi-Model Strategy): Do not build your automation around a single AI API. Use a platform or middleware that supports multiple LLMs, and configure your workflows with a primary and a secondary model. For example, set your main blog article generator to use Anthropic’s Claude 3.5 Sonnet for its reasoning, but program your workflow in Make.com or n8n to retry automatically with OpenAI’s o1-preview if the Claude API call times out. Store your brand voice and guidelines in a vector database that every model can query, so output stays consistent whichever model handles a task.

2. Implement Zero-Trust Security: Treat every automation step as untrusted. Use tools like Bright Data or Apify for secure, anonymized data scraping to feed your AI, avoiding IP bans and data corruption, and validate scraped text before it reaches a prompt, since it can carry injected instructions. Store all API keys in a dedicated secrets manager like HashiCorp Vault or AWS Secrets Manager, never in plain text within your scripts. For your WordPress publishing endpoint, use application passwords and restrict API access by IP address. Encrypt all intermediate content files, especially those containing unpublished strategies or proprietary data.

3. Create Network Slices for Different Content Tiers: Segment your production line. Use one “slice” or dedicated project in your AI platform for high-volume, templated content like product descriptions. Use another, more secure slice with stricter model guidelines and human-in-the-loop checkpoints for sensitive content like thought leadership or regulatory announcements. This limits the blast radius of any errors or security incidents.

4. Deploy Continuous Monitoring and Auto-Healing: Just as military networks have 24/7 network operations centers (NOCs), set up monitoring for your AI workflows. Use tools like UptimeRobot or Better Stack to monitor the health of your API endpoints. Integrate a service like Google’s Perspective API or a custom classifier to automatically flag AI-generated content that exceeds your safety thresholds. Build your automation so that flagged content is routed to a human editor queue for review, while clean content proceeds to publishing.

5. Plan for Offline & Low-Resource Contingencies: Have a fallback plan for when cloud connectivity or AI services are degraded. This could mean maintaining a local, quantized version of a capable open-source model (e.g., a 7B-parameter model running via Ollama on a local server) that can handle essential content tasks. Store your content briefs and templates redundantly, in a format that can be accessed and edited manually if needed.
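Steps 3 and 4 can meet in a single routing decision. In this minimal Python sketch, sensitive content tiers always go to a human editor, and other drafts are published only if every guardrail score is under its threshold. It assumes scores in the 0 to 1 range have already been attached to each draft (Perspective API, for example, reports a 0 to 1 score for attributes such as `TOXICITY`); the `OFF_BRAND` attribute, the tier names, and the thresholds are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict

# Hypothetical thresholds and tiers; tune them against your own review data.
MAX_SCORE = {"TOXICITY": 0.7, "OFF_BRAND": 0.5}
SENSITIVE_TIERS = {"thought_leadership", "regulatory"}


@dataclass
class Draft:
    title: str
    tier: str                                               # e.g. "product_description"
    scores: Dict[str, float] = field(default_factory=dict)  # guardrail scores in [0, 1]


def route(draft: Draft) -> str:
    """Return 'human_review' or 'publish' for a generated draft."""
    if draft.tier in SENSITIVE_TIERS:
        return "human_review"  # sensitive slice: always a human checkpoint
    for attribute, limit in MAX_SCORE.items():
        # A missing score (e.g. a classifier outage) defaults to 1.0, so the draft fails closed.
        if draft.scores.get(attribute, 1.0) > limit:
            return "human_review"
    return "publish"


print(route(Draft("Blue Mug", "product_description", {"TOXICITY": 0.02, "OFF_BRAND": 0.1})))  # publish
print(route(Draft("Blue Mug", "product_description", {"TOXICITY": 0.02})))  # human_review
print(route(Draft("2026 Outlook", "thought_leadership", {"TOXICITY": 0.0, "OFF_BRAND": 0.0})))  # human_review
```

Treating a missing score as a failure means an outage in the classifier sends drafts to humans instead of straight to publishing.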

The era of fragile, single-point-of-failure AI content pipelines is ending. The rigorous, battle-tested strategies emerging from military 5G deployments provide a clear roadmap for the next generation of content automation. By 2027, the defining characteristic of successful AI content studios is likely to be the resilience, security, and adaptability of their production systems rather than the raw volume of their output. Forward-thinking creators will stop asking “which AI writes the best blog post?” and start asking “how do I build a content network that stays up, resists attack, and adapts to any challenge?” The principles of autonomy, layered security, and dynamic resource management are your new strategic imperatives. Implementing this blueprint today is what will separate the market leaders from the disrupted in the rapidly evolving landscape of AI-powered content.